FAQ 🙋‍♀️

What types of tasks does Superwise support?

Superwise supports any task that involves tabular data, including supervised and unsupervised learning, classification, regression, and more. Array data types are not currently supported, so recommendation and embedding use cases are not supported either.
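Because array-valued fields are not supported, it can help to check records on your side before sending them. The following is a minimal sketch, not part of any Superwise SDK: `find_array_fields` is a hypothetical helper, and it assumes each record is a flat Python dict.

```python
from typing import Any, Mapping


def find_array_fields(record: Mapping[str, Any]) -> list[str]:
    """Return the names of fields holding array-like (non-scalar) values.

    Strings and bytes are iterable but count as scalars here; lists, tuples,
    sets, dicts and NumPy arrays are all flagged because they define __iter__.
    """
    return [
        name
        for name, value in record.items()
        if hasattr(value, "__iter__") and not isinstance(value, (str, bytes))
    ]


record = {"age": 42, "country": "US", "embedding": [0.12, -0.5, 0.33]}
print(find_array_fields(record))  # ['embedding']
```

Any field it reports would need to be dropped or flattened into scalar columns before the record can be monitored.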

Do you provide out-of-the-box metrics? If yes, what metrics do you provide?

Superwise provides a set of out-of-the-box metrics, ranging from min and max values to mean and standard deviation, as well as distribution shift and several kinds of drift. You can find the full collection on the Metrics page by clicking the Metrics button. Read more here for additional information and to learn how to create your own metrics!
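As a rough illustration of what a few of these metrics measure, the sketch below computes min, max, mean, and standard deviation for one numeric feature over a batch of values using only the standard library. It is not how Superwise computes metrics internally, and whether Superwise uses the population or the sample standard deviation is not stated here, so the choice of `statistics.pstdev` is an assumption.

```python
import statistics


def summary_metrics(values: list[float]) -> dict[str, float]:
    """Basic descriptive metrics for one numeric feature over a batch of values."""
    return {
        "min": min(values),
        "max": max(values),
        "mean": statistics.fmean(values),
        # Population standard deviation (divides by n); the n vs. n - 1 choice is an assumption.
        "std": statistics.pstdev(values),
    }


print(summary_metrics([2.0, 4.0, 4.0, 4.0, 5.0, 5.0, 7.0, 9.0]))
```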

Do I have to send labels to monitor my model?

Labels are required for metrics such as performance metrics, but many other metrics, such as drift, missing values, and mean value, are available to monitor even if you don't send label data. For more information, [read here](doc: metric)
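A minimal sketch of the difference, using hypothetical helper functions: a missing-value rate can be computed from feature values alone, while a performance metric such as accuracy stays undefined until labels arrive.

```python
from typing import Optional, Sequence


def missing_rate(values: Sequence[Optional[float]]) -> float:
    """Share of missing (None) values in a feature; needs no labels."""
    return sum(v is None for v in values) / len(values)


def accuracy(predictions: Sequence[int], labels: Optional[Sequence[int]]) -> Optional[float]:
    """Share of correct predictions; returns None while no labels have been sent."""
    if not labels:
        return None
    return sum(p == y for p, y in zip(predictions, labels)) / len(labels)


feature = [1.5, None, 2.0, None]
preds = [1, 0, 1, 1]
print(missing_rate(feature))          # 0.5
print(accuracy(preds, None))          # None
print(accuracy(preds, [1, 0, 0, 1]))  # 0.75
```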

How do you calculate drift?

See our Drift metrics documentation for details on how Superwise calculates drift metrics.
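The exact formula Superwise uses is described in its Drift metrics documentation and is not reproduced here. For intuition only, the sketch below measures drift of a numeric feature with the Jensen-Shannon distance between histograms of a reference and a current dataset; this is one common technique and may differ from Superwise's. The equal-width binning over the reference range and all function names are assumptions.

```python
import bisect
import math


def histogram(values: list[float], edges: list[float]) -> list[float]:
    """Share of values per bin; values outside the edges fall into the first or last bin."""
    counts = [0] * (len(edges) - 1)
    for v in values:
        i = bisect.bisect_right(edges, v) - 1
        counts[min(max(i, 0), len(counts) - 1)] += 1
    return [c / len(values) for c in counts]


def js_distance(p: list[float], q: list[float]) -> float:
    """Jensen-Shannon distance (base 2): 0 for identical, 1 for disjoint distributions."""
    m = [(a + b) / 2 for a, b in zip(p, q)]

    def kl(a: list[float], b: list[float]) -> float:
        # Terms with a zero probability in `a` contribute nothing.
        return sum(x * math.log2(x / y) for x, y in zip(a, b) if x > 0)

    return math.sqrt((kl(p, m) + kl(q, m)) / 2)


def numeric_drift(reference: list[float], current: list[float], bins: int = 10) -> float:
    """JS distance between histograms on equal-width bins spanning the reference range."""
    lo, hi = min(reference), max(reference)
    edges = [lo + k * (hi - lo) / bins for k in range(bins + 1)]
    return js_distance(histogram(reference, edges), histogram(current, edges))


reference = [float(x) for x in range(100)]
print(numeric_drift(reference, reference))                    # 0.0
print(numeric_drift(reference, [x + 50 for x in reference]))  # larger: the data has shifted
```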

Do I have to set a threshold for every metric if I want to get automatic alerts?

It's up to you! You can set thresholds manually, but Superwise also provides automatic time series anomaly detection. It learns the expected values of a metric from historical data and alerts you when the observed values deviate from them. Learn more…

What is an automatic threshold and how is it set?

Superwise can infer thresholds automatically using a heuristic that flags anomalies based on the 99th percentile on each side of the distribution (that is, the 1st and 99th percentiles), with seasonality-based control limits. Learn more…
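The heuristic can be pictured as percentile-based control limits computed separately for each season. This is a minimal sketch, not Superwise's implementation: it assumes weekly seasonality (one pair of limits per weekday), reads "99th percentile on each side" as the 1st and 99th percentiles, and uses hypothetical function names.

```python
import statistics
from collections import defaultdict
from datetime import date, timedelta


def seasonal_limits(history: list[tuple[date, float]]) -> dict[int, tuple[float, float]]:
    """Per-weekday (lower, upper) control limits at the 1st and 99th percentiles."""
    by_season: dict[int, list[float]] = defaultdict(list)
    for day, value in history:
        by_season[day.weekday()].append(value)  # assumed season: day of week
    limits = {}
    for season, values in by_season.items():
        # quantiles(n=100) returns the 99 cut points P1..P99 (needs at least 2 values).
        cuts = statistics.quantiles(values, n=100, method="inclusive")
        limits[season] = (cuts[0], cuts[-1])
    return limits


def is_anomaly(day: date, value: float, limits: dict[int, tuple[float, float]]) -> bool:
    """True if the value falls outside the control limits for that day's season."""
    lower, upper = limits[day.weekday()]
    return not lower <= value <= upper


# Synthetic history: weekdays hover around 100, weekends around 10.
start = date(2024, 1, 1)  # a Monday
history = []
for i in range(56):
    day = start + timedelta(days=i)
    base = 10.0 if day.weekday() >= 5 else 100.0
    history.append((day, base + i % 3))

limits = seasonal_limits(history)
print(is_anomaly(date(2024, 3, 2), 100.0, limits))  # Saturday: True
print(is_anomaly(date(2024, 3, 4), 101.0, limits))  # Monday: False
```

A value that is normal on a weekday is flagged on a weekend, which is the point of seasonality-based limits.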

Can I send historical data to Superwise?

Yes, as long as no data from any other model version has been logged to Superwise for those dates.
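A hypothetical pre-check you might run on your side before backfilling. The `logged` mapping is an assumed local record of which date ranges each model version already has in Superwise; it is not something the platform is documented here to return in this shape.

```python
from datetime import date


def can_backfill(version: str, start: date, end: date,
                 logged: dict[str, list[tuple[date, date]]]) -> bool:
    """True if no *other* version has data logged within [start, end] (inclusive)."""
    for other_version, ranges in logged.items():
        if other_version == version:
            continue
        for logged_start, logged_end in ranges:
            # Two inclusive ranges overlap unless one ends before the other starts.
            if logged_start <= end and start <= logged_end:
                return False
    return True


logged = {"v1": [(date(2024, 1, 1), date(2024, 1, 31))]}
print(can_backfill("v2", date(2024, 1, 15), date(2024, 2, 15), logged))  # False
print(can_backfill("v2", date(2024, 2, 1), date(2024, 2, 28), logged))   # True
```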

What is the data retention policy?

We currently don't store raw data; we store only aggregated data, so there is no data retention policy.
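To illustrate why metrics do not require raw data, here is a minimal sketch of a mergeable aggregate: each batch keeps only a count, sums, and extremes, and batches can be combined later. The fields stored are an assumption for illustration and do not describe Superwise's actual storage format.

```python
import math
from dataclasses import dataclass


@dataclass
class Aggregate:
    """Summary of a batch of values; the raw values themselves are not kept."""
    count: int = 0
    total: float = 0.0
    total_sq: float = 0.0
    minimum: float = math.inf
    maximum: float = -math.inf

    def add(self, value: float) -> None:
        self.count += 1
        self.total += value
        self.total_sq += value * value
        self.minimum = min(self.minimum, value)
        self.maximum = max(self.maximum, value)

    def merge(self, other: "Aggregate") -> "Aggregate":
        """Combine two aggregates (e.g. two days) without access to raw data."""
        return Aggregate(self.count + other.count, self.total + other.total,
                         self.total_sq + other.total_sq,
                         min(self.minimum, other.minimum), max(self.maximum, other.maximum))

    @property
    def mean(self) -> float:
        return self.total / self.count

    @property
    def std(self) -> float:
        # Population std from stored sums; this formula can lose precision for large values.
        return math.sqrt(max(self.total_sq / self.count - self.mean ** 2, 0.0))


monday, tuesday = Aggregate(), Aggregate()
for v in [2.0, 4.0, 4.0, 4.0]:
    monday.add(v)
for v in [5.0, 5.0, 7.0, 9.0]:
    tuesday.add(v)
week = monday.merge(tuesday)
print(week.count, week.mean, week.std)
```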

If I create a new monitoring policy, when does it start running?

A new monitoring policy runs on the schedule you specified when you created it. By default, all policies run every day at 4 pm.

You can also run it immediately with the API route: `POST /monitor/v1/policy/{policy_id}/execute`

Link to API docs
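A minimal sketch of calling that route with Python's standard library. The base URL, the token, and the Bearer `Authorization` header are placeholders and assumptions, not values confirmed here; take the real ones from the API docs. The helper only builds the request and does not send it.

```python
import urllib.parse
import urllib.request

# Hypothetical base URL: replace with the one given in the API docs.
BASE_URL = "https://api.example.com"


def build_execute_request(policy_id: str, token: str) -> urllib.request.Request:
    """Build (but do not send) a POST request that runs a monitoring policy now."""
    url = f"{BASE_URL}/monitor/v1/policy/{urllib.parse.quote(policy_id, safe='')}/execute"
    return urllib.request.Request(
        url,
        method="POST",
        # Bearer authentication is an assumption; check the API docs for the actual scheme.
        headers={"Authorization": f"Bearer {token}"},
    )


req = build_execute_request("1234", "YOUR_TOKEN")
print(req.get_method(), req.full_url)
# To actually send it: urllib.request.urlopen(req)
```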

Can I override or modify a prediction after it was sent?

No, this is not possible. Once you send a prediction to Superwise, you can no longer modify the data.

How can I close/resolve an incident?

Every incident is closed automatically once its measured values return to within the control limits.

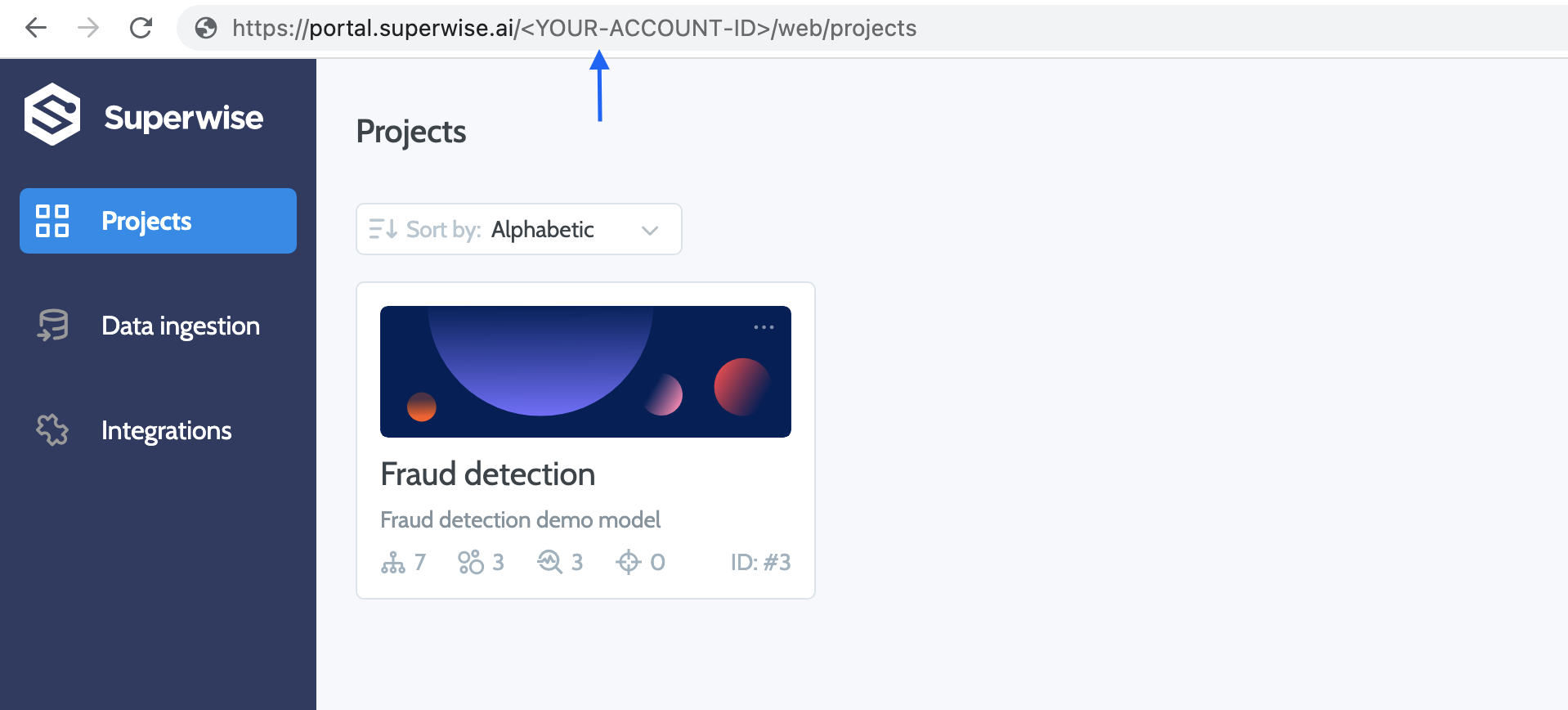
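A minimal sketch of that incident lifecycle, assuming a single metric with fixed lower and upper control limits. `IncidentTracker` is a hypothetical name, not a Superwise object; it opens an incident when a value leaves the limits and closes it as soon as a value is back inside them.

```python
from dataclasses import dataclass, field


@dataclass
class IncidentTracker:
    """Tracks one metric's incident state against fixed control limits."""
    lower: float
    upper: float
    is_open: bool = False
    history: list[str] = field(default_factory=list)

    def observe(self, value: float) -> None:
        within = self.lower <= value <= self.upper
        if not within and not self.is_open:
            self.is_open = True
            self.history.append(f"opened at {value}")
        elif within and self.is_open:
            # No manual action: the incident closes once values are back within limits.
            self.is_open = False
            self.history.append(f"closed at {value}")


tracker = IncidentTracker(lower=0.0, upper=1.0)
for v in [0.5, 1.4, 1.2, 0.8]:
    tracker.observe(v)
print(tracker.history)  # ['opened at 1.4', 'closed at 0.8']
```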

In the API, what should I put as the customer ID?

How do I create a multiclass label distribution drift metric?

To create a label drift metric for a multiclass label distribution:

- Configure a new drift metric and choose the fields to compute it on; in this case, choose the relevant label field.

- Choose the reference dataset to compute drift against.

- Our distribution distance metric can compute the distance between two distributions for both numeric and categorical (binary or multi-valued) fields.
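For intuition about what a label drift metric compares, the sketch below computes the distance between the class distributions of a reference and a current set of labels. It uses the Jensen-Shannon distance as an assumed stand-in; Superwise's own distribution distance metric may be computed differently, and the function names are hypothetical.

```python
import math
from collections import Counter


def label_distribution(labels: list[str], classes: list[str]) -> list[float]:
    """Share of each class in a list of labels, in a fixed class order."""
    counts = Counter(labels)
    return [counts[c] / len(labels) for c in classes]


def js_distance(p: list[float], q: list[float]) -> float:
    """Jensen-Shannon distance (base 2): 0 for identical, 1 for disjoint distributions."""
    m = [(a + b) / 2 for a, b in zip(p, q)]

    def kl(a: list[float], b: list[float]) -> float:
        return sum(x * math.log2(x / y) for x, y in zip(a, b) if x > 0)

    return math.sqrt((kl(p, m) + kl(q, m)) / 2)


def label_drift(reference: list[str], current: list[str]) -> float:
    """Drift between two multiclass label distributions over the union of classes seen."""
    classes = sorted(set(reference) | set(current))
    return js_distance(label_distribution(reference, classes),
                       label_distribution(current, classes))


reference = ["cat"] * 50 + ["dog"] * 30 + ["bird"] * 20
current = ["cat"] * 20 + ["dog"] * 30 + ["bird"] * 50
print(label_drift(reference, current))
```

Taking the union of classes means a class that appears only in the current data still contributes to the distance.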

How do I create a proxy performance metric?

You can do this in one of two ways: either overwrite the proxy label performance metric each time until the real label arrives, or keep proxy and real label performance metrics side by side.
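The two options can be sketched with plain dictionaries standing in for stored metric values. The metric names, predictions, and proxy and real labels are hypothetical examples.

```python
def accuracy(predictions: list[int], labels: list[int]) -> float:
    """Share of predictions that match the given labels."""
    return sum(p == y for p, y in zip(predictions, labels)) / len(labels)


predictions = [1, 0, 1, 1]
proxy_labels = [1, 0, 0, 1]  # hypothetical early signal, available right away
real_labels = [1, 1, 0, 1]   # hypothetical ground truth, arriving later

# Option 1: overwrite. One metric holds the proxy value until the real labels arrive.
overwrite = {"accuracy": accuracy(predictions, proxy_labels)}
overwrite["accuracy"] = accuracy(predictions, real_labels)

# Option 2: side by side. Both metrics are kept so they can be compared over time.
side_by_side = {
    "proxy_accuracy": accuracy(predictions, proxy_labels),
    "accuracy": accuracy(predictions, real_labels),
}
print(overwrite)     # {'accuracy': 0.5}
print(side_by_side)  # {'proxy_accuracy': 0.75, 'accuracy': 0.5}
```

Overwriting keeps a single, always-current number; keeping both lets you check how well the proxy tracked the real metric.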
